Feihu Zhang, Longquan Dai, Shiming Xiang, Xiaopeng Zhang

Abstract

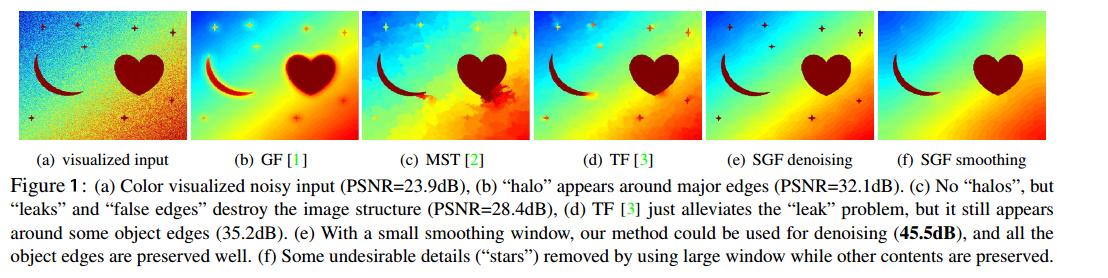

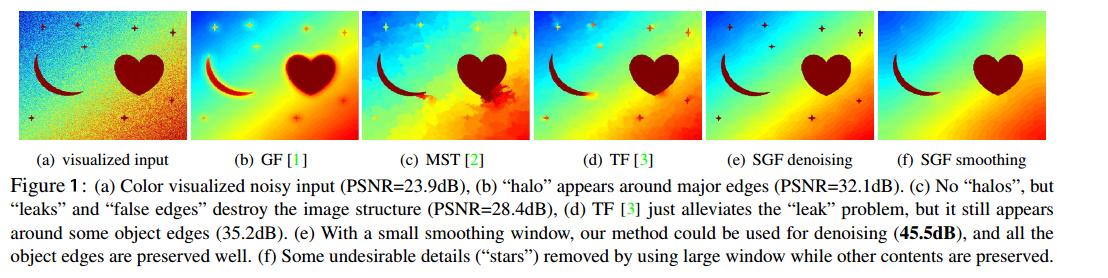

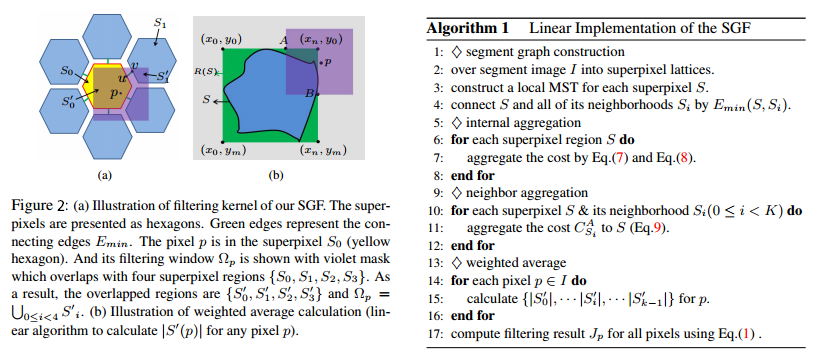

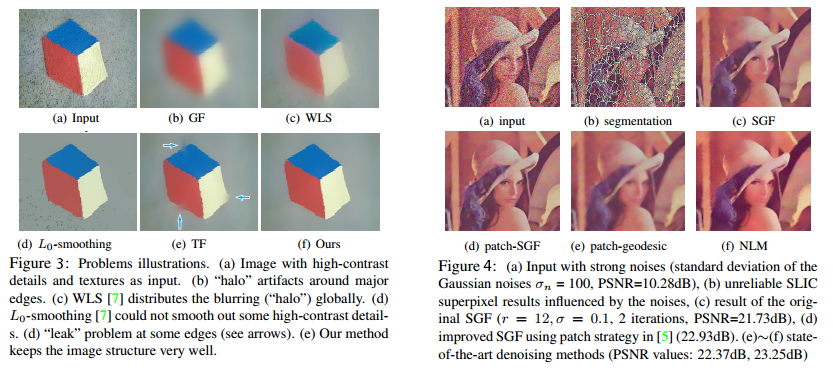

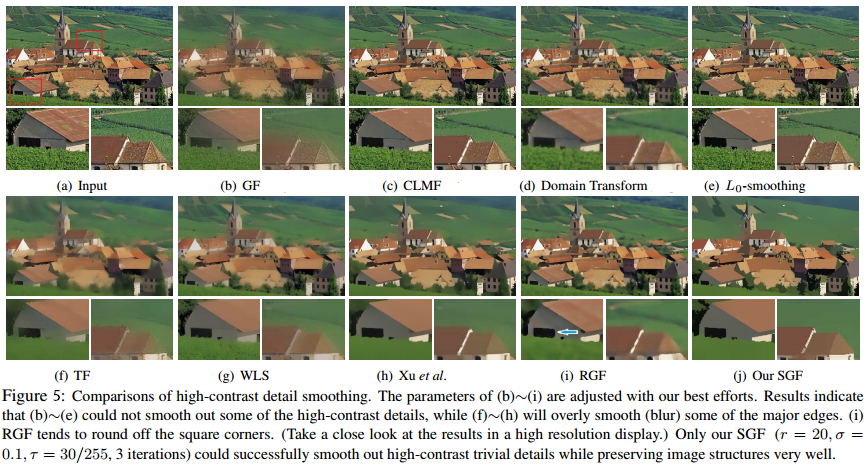

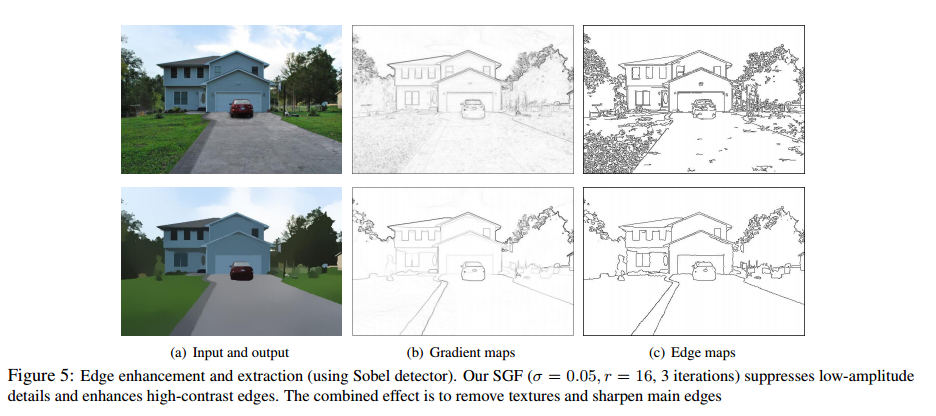

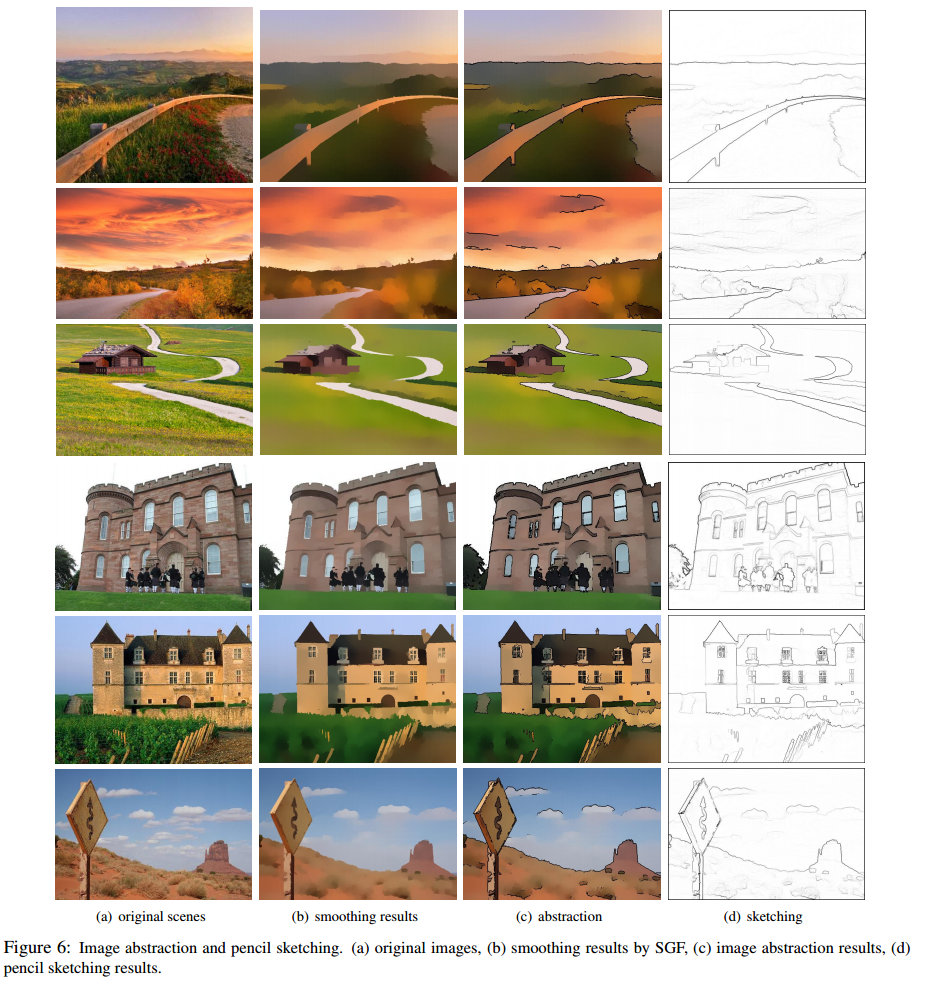

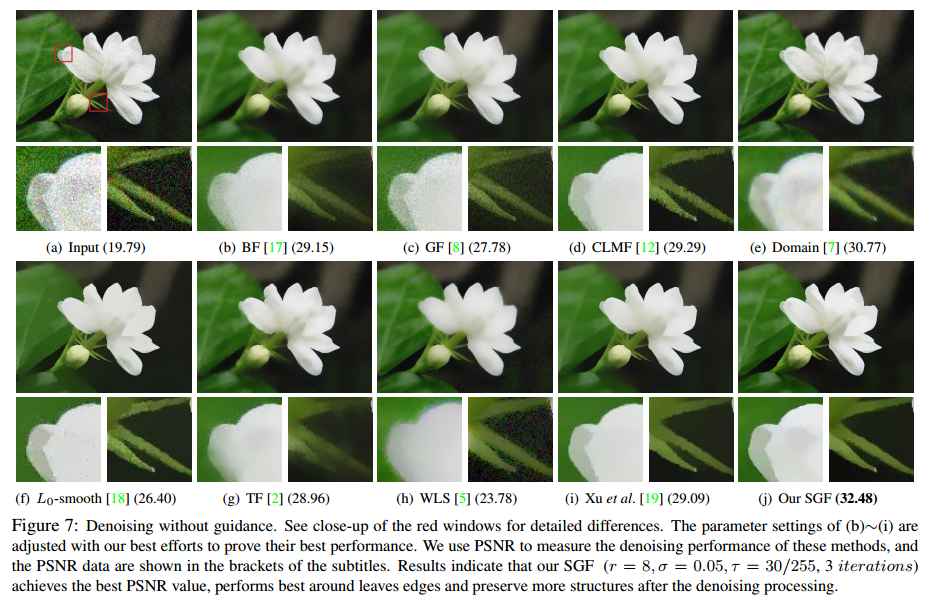

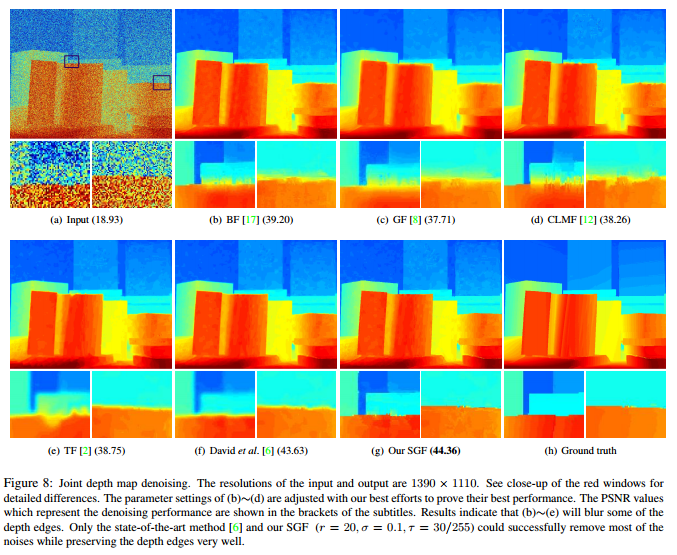

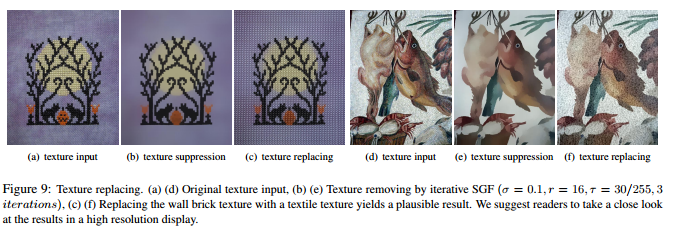

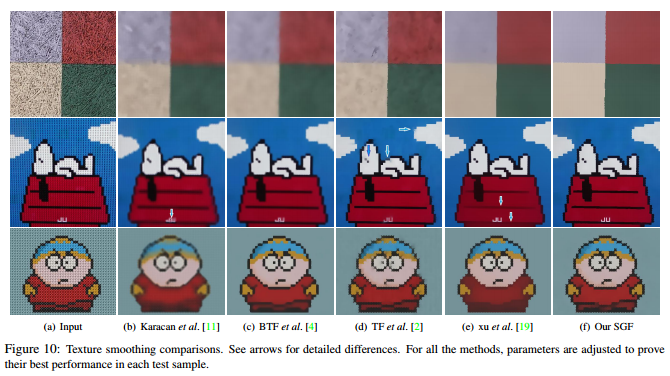

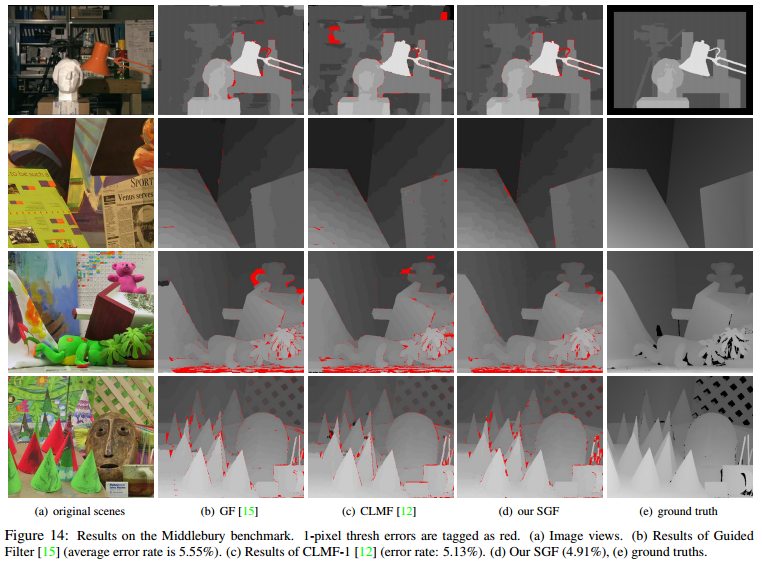

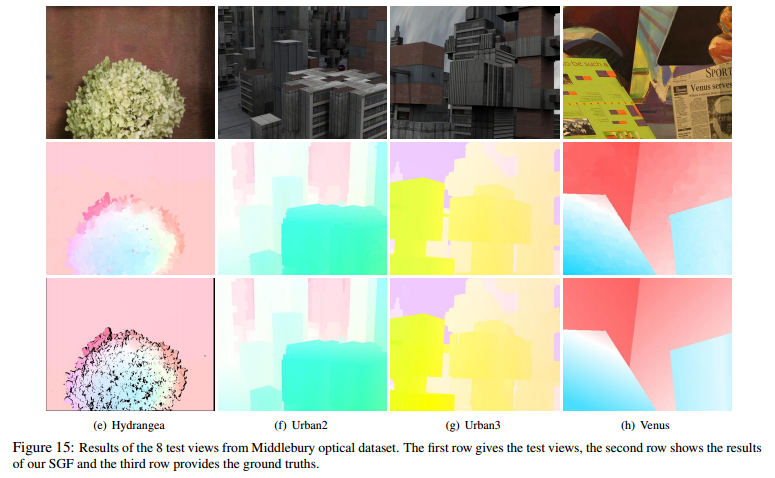

We design a new edge-aware structure, named the segment graph, to represent the image, and we further develop a novel double weighted average image filter (SGF) based on it. The segment graph and the double weighted form make the SGF flexible across applications and let it overcome the “halo” and “leak” problems that appear in most state-of-the-art approaches. We also develop a linear-time algorithm for the SGF, with O(N) time complexity for both gray-scale and high-dimensional images, regardless of the kernel size and the intensity range. As one of the fastest edge-preserving filters, our CPU implementation takes 0.15 s per megapixel when filtering 3-channel color images. The strength of the proposed filter is demonstrated in various applications, including stereo matching, optical flow, joint depth map upsampling, edge-preserving smoothing, edge detection, image denoising, abstraction, and texture editing.
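As a rough illustration of what an edge-aware weighted average does, the sketch below filters a noisy 1D step signal with a generic bilateral-style kernel. It is not the SGF algorithm: the function name `weighted_average_filter`, the fixed window `radius`, and the Gaussian range kernel `sigma_range` are assumptions chosen for illustration, and this naive loop costs O(N·radius) rather than the O(N) reported above.

```python
import math


def weighted_average_filter(signal, radius=2, sigma_range=0.1):
    """Generic edge-aware weighted average (bilateral-style), not SGF itself.

    Each output sample is sum_q w(p, q) * I_q / sum_q w(p, q), where the
    weight w(p, q) shrinks as the intensity difference |I_p - I_q| grows.
    """
    n = len(signal)
    out = []
    for p in range(n):
        num = 0.0
        den = 0.0
        for q in range(max(0, p - radius), min(n, p + radius + 1)):
            # Samples across a strong edge get a near-zero weight,
            # so they barely contribute to the average.
            diff = signal[p] - signal[q]
            w = math.exp(-(diff * diff) / (2.0 * sigma_range ** 2))
            num += w * signal[q]
            den += w
        # den >= 1 because the center sample always has weight 1.
        out.append(num / den)
    return out


# A noisy step: flat near 0, then flat near 1.
step = [0.0, 0.02, -0.01, 0.01, 1.0, 0.98, 1.01, 0.99]
smoothed = weighted_average_filter(step)
```

With these settings the samples on either side of the step stay close to their original levels while the small fluctuations within each flat region are averaged out, which is the behavior an edge-preserving filter is expected to show.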

Downloads

"Segment Graph Based Image Filtering: Fast Structure-Preserving Smoothing." Feihu Zhang, Longquan Dai, Shiming Xiang, Xiaopeng Zhang. IEEE International Conference on Computer Vision (ICCV), 2015.

Our Method

Experiments

Applications
